In this post you will find a K means clustering example with word2vec in Python code. Word2Vec is one of the most popular methods for language modeling and feature learning in natural language processing (NLP). It is used to create word embeddings whenever we need a vector representation of words.

For example, in data clustering algorithms we can use Word2Vec instead of the bag of words (BOW) model. The advantage of Word2Vec is that it can capture the distance between individual words.

The example in this post demonstrates how to use Word2Vec word embeddings in clustering algorithms. For this, the Word2Vec embeddings will be fed into K means clustering implementations from the NLTK and scikit-learn libraries.

Here we will cluster at the word level, so our clusters will be groups of words. If you need to cluster at the sentence or paragraph level, here is a link that shows how to move from the word level to the sentence/paragraph level:

Text Clustering with Word Embedding in Machine Learning

There is also the doc2vec model, which is based on word2vec and was created for embedding sentences, paragraphs, and documents. Here is a link on how to use doc2vec embeddings in machine learning:

Text Clustering with doc2vec Word Embedding Machine Learning Model

Getting Word2vec

Using word2vec from the Python library gensim is simple and well described in tutorials and on the web [3], [4], [5]. Here we just look at a basic example. For the input we use a sequence of sentences hard-coded in the script.

from gensim.models import Word2Vec

sentences = [['this', 'is', 'the', 'good', 'machine', 'learning', 'book'],

['this', 'is', 'another', 'book'],

['one', 'more', 'book'],

['this', 'is', 'the', 'new', 'post'],

['this', 'is', 'about', 'machine', 'learning', 'post'],

['and', 'this', 'is', 'the', 'last', 'post']]

model = Word2Vec(sentences, min_count=1)

Now we have a model with the words embedded. We can query the model for similar words as below, or ask for the vector that represents a word:

print (model.similarity('this', 'is'))

print (model.similarity('post', 'book'))

#output -0.0198180344218

#output -0.079446731287

print (model.most_similar(positive=['machine'], negative=[], topn=2))

#output: [('new', 0.24608060717582703), ('is', 0.06899910420179367)]

print (model['the'])

#output [-0.00217354 -0.00237131 0.00296396 ..., 0.00138597 0.00291924 0.00409528]

To get vocabulary or the number of words in vocabulary:

print (list(model.vocab))

print (len(list(model.vocab)))

This will produce: ['good', 'this', 'post', 'another', 'learning', 'last', 'the', 'and', 'more', 'new', 'is', 'one', 'about', 'machine', 'book']

Now we will feed the word embeddings into a clustering algorithm such as k means, which is one of the most popular unsupervised learning algorithms for finding interesting segments in data. It can be used for separating customers into groups, combining documents into topics, and for many other applications.

Below are two k means clustering examples.

K Means Clustering with NLTK Library

Our first example uses the k means algorithm from the NLTK library.

To use word2vec word embeddings in machine learning clustering algorithms, we initialize X as below:

X = model[model.vocab]

Now we can plug our X data into clustering algorithms.

from nltk.cluster import KMeansClusterer

import nltk

NUM_CLUSTERS=3

kclusterer = KMeansClusterer(NUM_CLUSTERS, distance=nltk.cluster.util.cosine_distance, repeats=25)

assigned_clusters = kclusterer.cluster(X, assign_clusters=True)

print (assigned_clusters)

# output: [0, 2, 1, 2, 2, 1, 2, 2, 0, 1, 0, 1, 2, 1, 2]

In the Python code above, there are several options for the distance function, as below:

nltk.cluster.util.cosine_distance(u, v)

Returns 1 minus the cosine of the angle between vectors v and u. This is equal to 1 – (u.v / |u||v|).

nltk.cluster.util.euclidean_distance(u, v)

Returns the euclidean distance between vectors u and v. This is equivalent to the length of the vector (u – v).

Here we use cosine distance to cluster our data.
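To make the two distance options more concrete, here is a minimal sketch that reimplements both formulas in plain Python. The function bodies and the sample vectors are written for illustration only and are not NLTK's own code.

```python
import math

def cosine_distance(u, v):
    """1 minus the cosine of the angle between u and v: 1 - (u.v / (|u||v|))."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)

def euclidean_distance(u, v):
    """Length of the difference vector (u - v)."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

# Hypothetical 2-d vectors: b points the same way as a but is twice as long,
# c is perpendicular to a.
a = [1.0, 2.0]
b = [2.0, 4.0]
c = [-2.0, 1.0]

print(cosine_distance(a, b))     # close to 0: same direction
print(cosine_distance(a, c))     # 1.0: perpendicular vectors
print(euclidean_distance(a, b))  # sqrt(5): the lengths differ
```

Cosine distance ignores vector length and compares direction only, while Euclidean distance is sensitive to both.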

After we get the cluster results, we can associate each word with the cluster it was assigned to:

words = list(model.vocab)

for i, word in enumerate(words):

    print (word + ":" + str(assigned_clusters[i]))

Here is the output for the above:

good:0

this:2

post:1

another:2

learning:2

last:1

the:2

and:2

more:0

new:1

is:0

one:1

about:2

machine:1

book:2
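It can be easier to read the result grouped by cluster rather than word by word. The sketch below does this for the output shown above; the word list and cluster ids are copied from that output, and the variable name `clusters` is just an illustrative choice.

```python
from collections import defaultdict

# Words and cluster ids copied from the output shown above.
words = ['good', 'this', 'post', 'another', 'learning', 'last', 'the', 'and',
         'more', 'new', 'is', 'one', 'about', 'machine', 'book']
assigned_clusters = [0, 2, 1, 2, 2, 1, 2, 2, 0, 1, 0, 1, 2, 1, 2]

# Collect the words that share a cluster id.
clusters = defaultdict(list)
for word, cluster_id in zip(words, assigned_clusters):
    clusters[cluster_id].append(word)

for cluster_id in sorted(clusters):
    print(cluster_id, clusters[cluster_id])
```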

K Means Clustering with Scikit-learn Library

This example is based on k means from the scikit-learn library.

from sklearn import cluster

from sklearn import metrics

kmeans = cluster.KMeans(n_clusters=NUM_CLUSTERS)

kmeans.fit(X)

labels = kmeans.labels_

centroids = kmeans.cluster_centers_

print ("Cluster id labels for inputted data")

print (labels)

print ("Centroids data")

print (centroids)

print ("Score (Opposite of the value of X on the K-means objective which is Sum of distances of samples to their closest cluster center):")

print (kmeans.score(X))

silhouette_score = metrics.silhouette_score(X, labels, metric='euclidean')

print ("Silhouette_score: ")

print (silhouette_score)

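In this example we also compute two metrics for estimating clustering quality: the k means score and the silhouette score. Note that the scikit-learn score is the negative of the k means objective, which is the sum of squared distances of samples to their closest cluster center.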

Output:

Cluster id labels for inputted data

[0 1 1 ..., 1 2 2]

Centroids data

[[ -3.82586889e-04 1.39791325e-03 -2.13839358e-03 ..., -8.68172920e-04

-1.23599875e-03 1.80053393e-03]

[ -3.11774168e-04 -1.63297475e-03 1.76715955e-03 ..., -1.43826099e-03

1.22940990e-03 1.06353679e-03]

[ 1.91571176e-04 6.40696089e-04 1.38173658e-03 ..., -3.26442620e-03

-1.08828480e-03 -9.43636987e-05]]

Score (Opposite of the value of X on the K-means objective which is Sum of distances of samples to their closest cluster center):

-0.00894730946094

Silhouette_score:

0.0427737
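To show what the score value measures, here is a small NumPy sketch that computes the negative sum of squared distances from each sample to its nearest centroid. The helper name `kmeans_score` and the toy data are assumptions made for this illustration; this is not scikit-learn's implementation.

```python
import numpy as np

def kmeans_score(X, centroids):
    """Negative sum of squared distances from each sample to its nearest centroid."""
    # diffs[i, j] is the difference vector between sample i and centroid j
    diffs = X[:, np.newaxis, :] - centroids[np.newaxis, :, :]
    sq_dists = (diffs ** 2).sum(axis=2)
    # keep only the distance to the closest centroid for every sample
    return -sq_dists.min(axis=1).sum()

# Hypothetical data: four points, each exactly 1 away from its nearest centroid.
X_toy = np.array([[0.0, 0.0], [0.0, 2.0], [10.0, 0.0], [10.0, 2.0]])
centroids_toy = np.array([[0.0, 1.0], [10.0, 1.0]])

print(kmeans_score(X_toy, centroids_toy))  # -4.0
```

Called with the fitted `kmeans.cluster_centers_`, a function like this should agree with `kmeans.score(X)` up to floating-point error. Values closer to zero mean the samples sit closer to their cluster centers.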

Here is the full python code of the script.

# -*- coding: utf-8 -*-

from gensim.models import Word2Vec

from nltk.cluster import KMeansClusterer

import nltk

from sklearn import cluster

from sklearn import metrics

# training data

sentences = [['this', 'is', 'the', 'good', 'machine', 'learning', 'book'],

['this', 'is', 'another', 'book'],

['one', 'more', 'book'],

['this', 'is', 'the', 'new', 'post'],

['this', 'is', 'about', 'machine', 'learning', 'post'],

['and', 'this', 'is', 'the', 'last', 'post']]

# training model

model = Word2Vec(sentences, min_count=1)

# get vector data

X = model[model.vocab]

print (X)

print (model.similarity('this', 'is'))

print (model.similarity('post', 'book'))

print (model.most_similar(positive=['machine'], negative=[], topn=2))

print (model['the'])

print (list(model.vocab))

print (len(list(model.vocab)))

NUM_CLUSTERS=3

kclusterer = KMeansClusterer(NUM_CLUSTERS, distance=nltk.cluster.util.cosine_distance, repeats=25)

assigned_clusters = kclusterer.cluster(X, assign_clusters=True)

print (assigned_clusters)

words = list(model.vocab)

for i, word in enumerate(words):

    print (word + ":" + str(assigned_clusters[i]))

kmeans = cluster.KMeans(n_clusters=NUM_CLUSTERS)

kmeans.fit(X)

labels = kmeans.labels_

centroids = kmeans.cluster_centers_

print ("Cluster id labels for inputted data")

print (labels)

print ("Centroids data")

print (centroids)

print ("Score (Opposite of the value of X on the K-means objective which is Sum of distances of samples to their closest cluster center):")

print (kmeans.score(X))

silhouette_score = metrics.silhouette_score(X, labels, metric='euclidean')

print ("Silhouette_score: ")

print (silhouette_score)

References

1. Word embedding

2. Comparative study of word embedding methods in topic segmentation

3. models.word2vec – Deep learning with word2vec

4. Word2vec Tutorial

5. How to Develop Word Embeddings in Python with Gensim

6. nltk.cluster package

Hi, this is quite an interesting example. I have tried to run your code but when I reach `assigned_clusters = kclusterer.cluster(X, assign_clusters=True)` I get the error `ValueError: math domain error`. I am not sure how we can get different result. I am using python 3.6

Hi Valerio,

I was using python 3.5 on Windows but not sure if this can make difference or not.

Was this issue solved ? Because I’m getting the same error. Please reply the solution if it got correct.

Convert X.dtype to float64 using X=numpy.float64(X) . If you pass X to kclusterer.cluster then it would work.

Is this solution for ‘ValueError: math domain error’ ???

I add X = numpy.float64(X) like this

# training model

model = Word2Vec(sentences, min_count=1,size=300)

# get vector data

X = model.wv

X= numpy.float64(X)

But I got “ValueError: setting an array element with a sequence. ” Error.

I’m not sure how to fix it

Thanks for the nice post. I’d like to suggest a few updates for those using the latest versions:

First, the vector model is now simply called with

X = model.wv

Secondly, Gensim documentation recommends assigning a new name for the model, followed by deleting the model, i.e.:

X = model.wv

del model

This suggestion is of course more useful for larger documents than this example.

Lastly, X = model.wv is a Gensim Word2VecKeyedVectors object. The word vectors needed for input in cluster analysis are now “X.vectors”.

The error previously reported is due to a Gensim Word2VecKeyedVectors object being passed to Sci-kit Learn clustering.

Jnicolich – thanks for the updates. This is very useful.

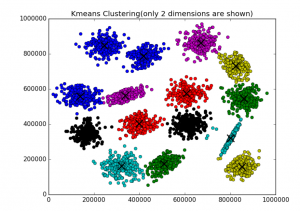

How have you plotted the clustering on a graph?

B – I used the regular Python matplotlib package for plotting the clusters. This is from the ML Sandbox that I am running: http://intelligentonlinetools.com/cgi-bin/analytics/ml.cgi, and the S1 dataset is available online.

Here are some details on clustering http://intelligentonlinetools.com/blog/2017/07/03/algorithms-metrics-and-online-tool-for-clustering/


Thanks for the blog. However, I’m struggling to understand the *sentences* list of lists part. What does it represent? Let’s say I have a list of tweets that I would like to cluster, how can I get word embeddings for each tweet instead of individual words? Thank you in advance for the response.

Hi Ndeky,

The list of lists just represents the input list of sentences. Here we are clustering at the word level and all embedding is done within word2vec. For each unique word we get an embedding vector.

If, instead of individual words, you want to cluster at the tweet (or sentence) level, you would need to get a vector for each tweet (sentence) by combining the embedding vectors of its individual words, using averaging or concatenation. I am not sure if the library now includes a function for this.

Here is how you can get embeddings (just for individual words):

model = Word2Vec(sentences, min_count=1, size = 300)

# get vector data

X = model[model.wv.vocab]

print (X)

Hope this helps.
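The averaging approach mentioned in this reply can be sketched as follows. The helper `sentence_vector` and the tiny 3-d embedding table are hypothetical stand-ins for vectors taken from a trained Word2Vec model; words without an embedding are simply skipped.

```python
import numpy as np

def sentence_vector(tokens, embeddings, dim):
    """Average the vectors of the tokens that have an embedding."""
    vectors = [embeddings[t] for t in tokens if t in embeddings]
    if not vectors:
        return np.zeros(dim)  # no known words: fall back to a zero vector
    return np.mean(vectors, axis=0)

# Hypothetical 3-d embeddings standing in for trained Word2Vec vectors.
toy_embeddings = {
    'good': np.array([1.0, 0.0, 0.0]),
    'book': np.array([0.0, 1.0, 0.0]),
    'post': np.array([0.0, 0.0, 1.0]),
}

tweets = [['good', 'book'], ['good', 'post'], ['unknown', 'words']]

# One row per tweet; this matrix can be clustered like the word matrix X.
X_sentences = np.array([sentence_vector(t, toy_embeddings, dim=3) for t in tweets])
print(X_sentences)
```

Averaging keeps every tweet vector the same size regardless of tweet length, which is what the clustering algorithms expect. Concatenation would produce vectors of different lengths unless the tweets are padded or truncated.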

is it possible to get different values as results? I ran this and the code worked, but my numbers were different for some reason. has the word2vec algorithm changed?

I think k means uses random initialization of the clusters, which might be why the results are different.

For reference:

The performance of K-means strongly depends on the initial guess of partition. Several random initialization methods for K-means have been developed. … Random seed randomly selects K instances (seed points), and assigns each of the other instances to the cluster with the nearest seed point.

A Deterministic Method for Initializing K-means Clustering – Electrical …

http://www.ece.neu.edu/faculty/jdy/papers/init-kmeans.pdf
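One common way to make a k means run repeatable despite random initialization is to fix the seed. The sketch below does this with scikit-learn's `random_state` parameter; the randomly generated toy data, the seed values, and the helper `fit_labels` are made up for illustration.

```python
import numpy as np
from sklearn.cluster import KMeans

# Hypothetical data: 30 random points in 5 dimensions.
rng = np.random.RandomState(0)
X_toy = rng.rand(30, 5)

def fit_labels(seed):
    """Fit k means with a fixed seed and return the cluster labels."""
    kmeans = KMeans(n_clusters=3, n_init=10, random_state=seed)
    return kmeans.fit(X_toy).labels_

labels_a = fit_labels(seed=42)
labels_b = fit_labels(seed=42)

# The same seed on the same data gives the same assignment.
print((labels_a == labels_b).all())
```

NLTK's `KMeansClusterer` takes an `rng` argument that can play a similar role. Word2Vec training also starts from random weights, so the vectors themselves may differ between runs; Gensim exposes a `seed` parameter for this, though its documentation notes that fully reproducible runs need further settings such as a single worker thread.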